/ README.md

README.md

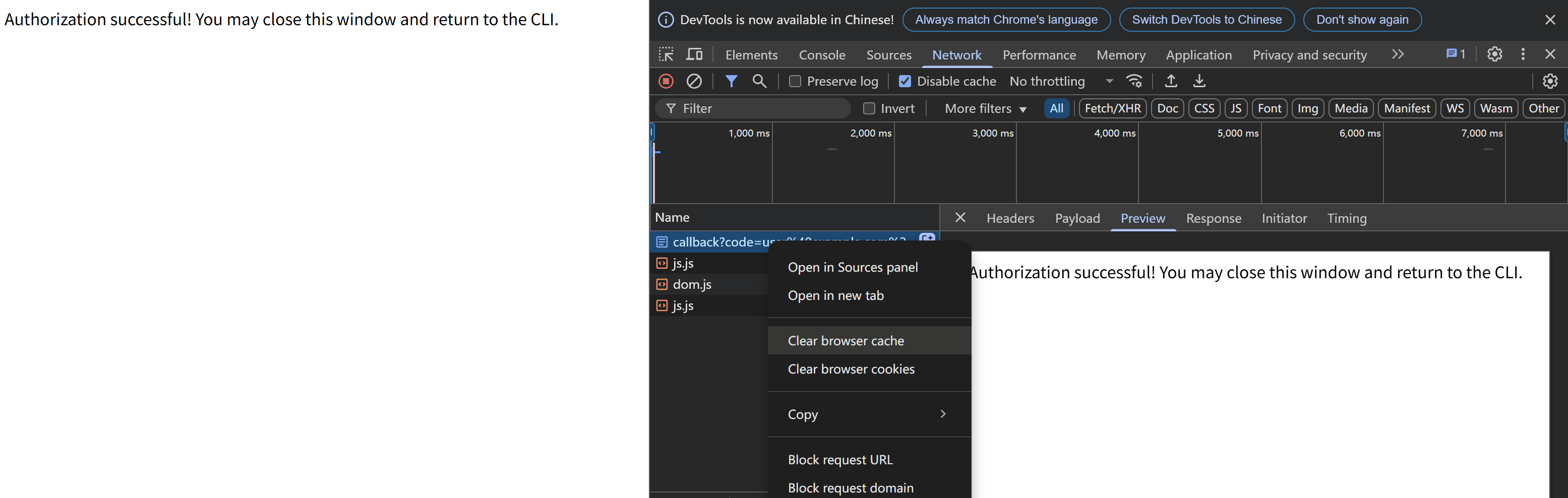

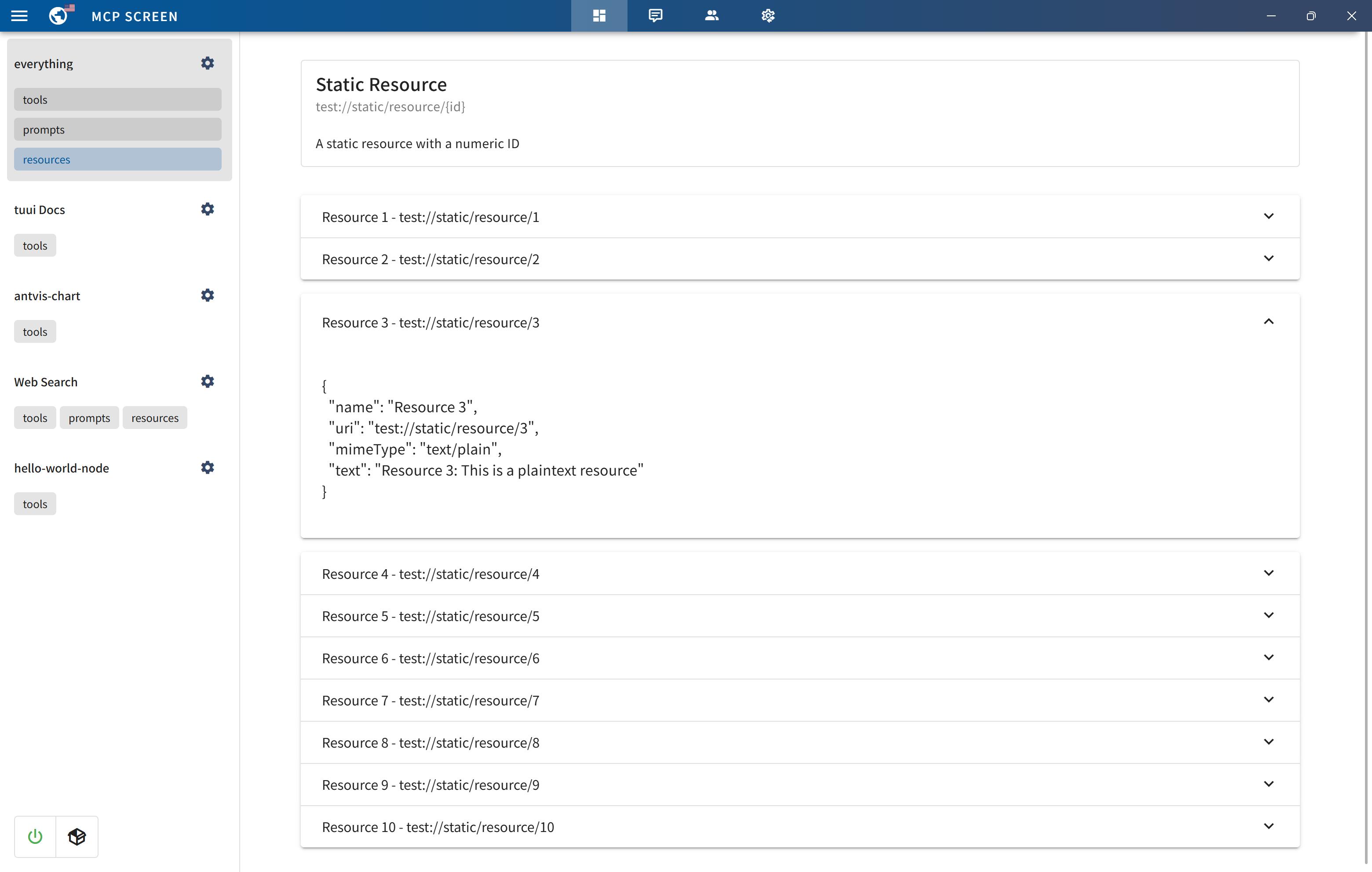

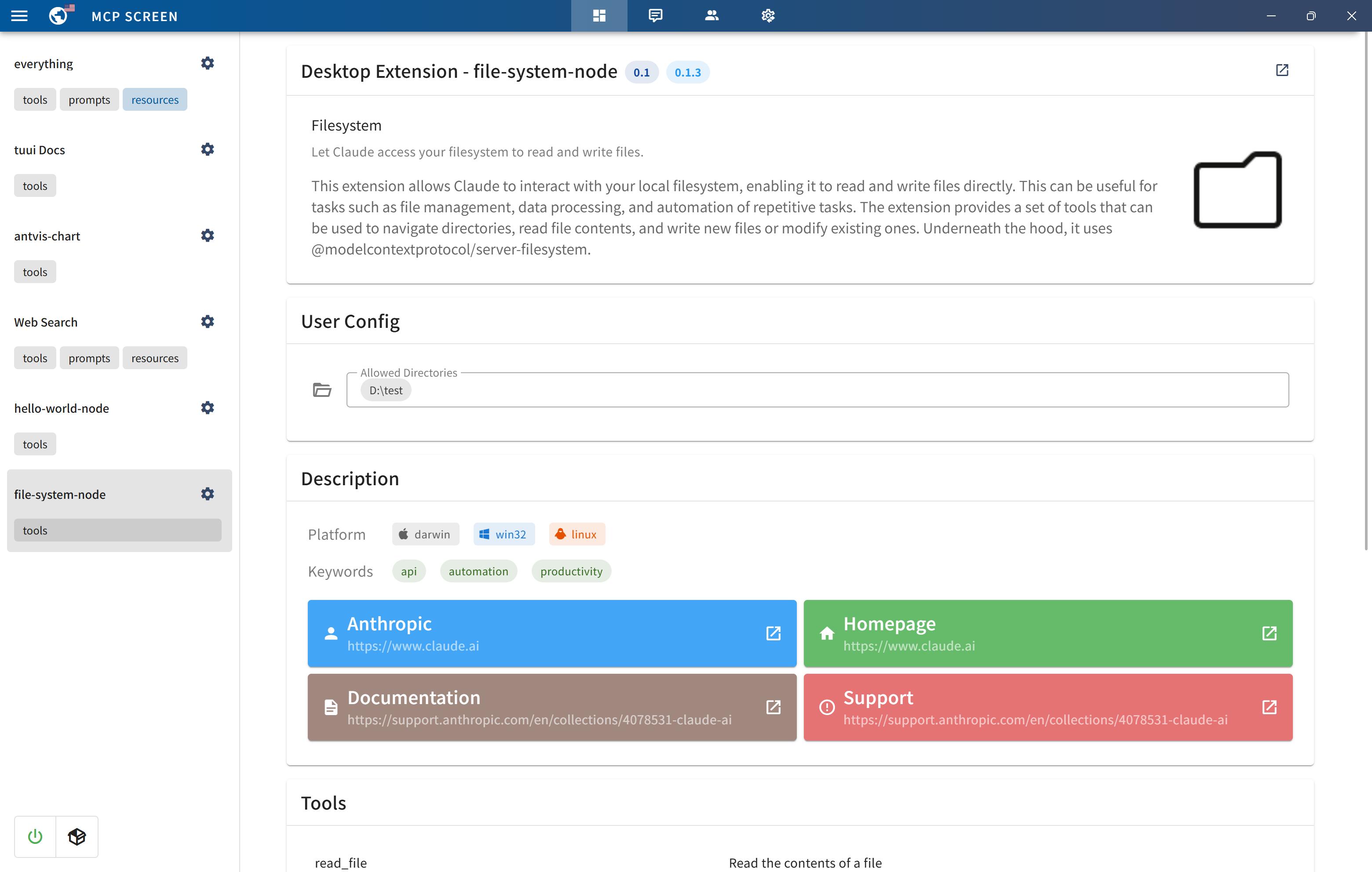

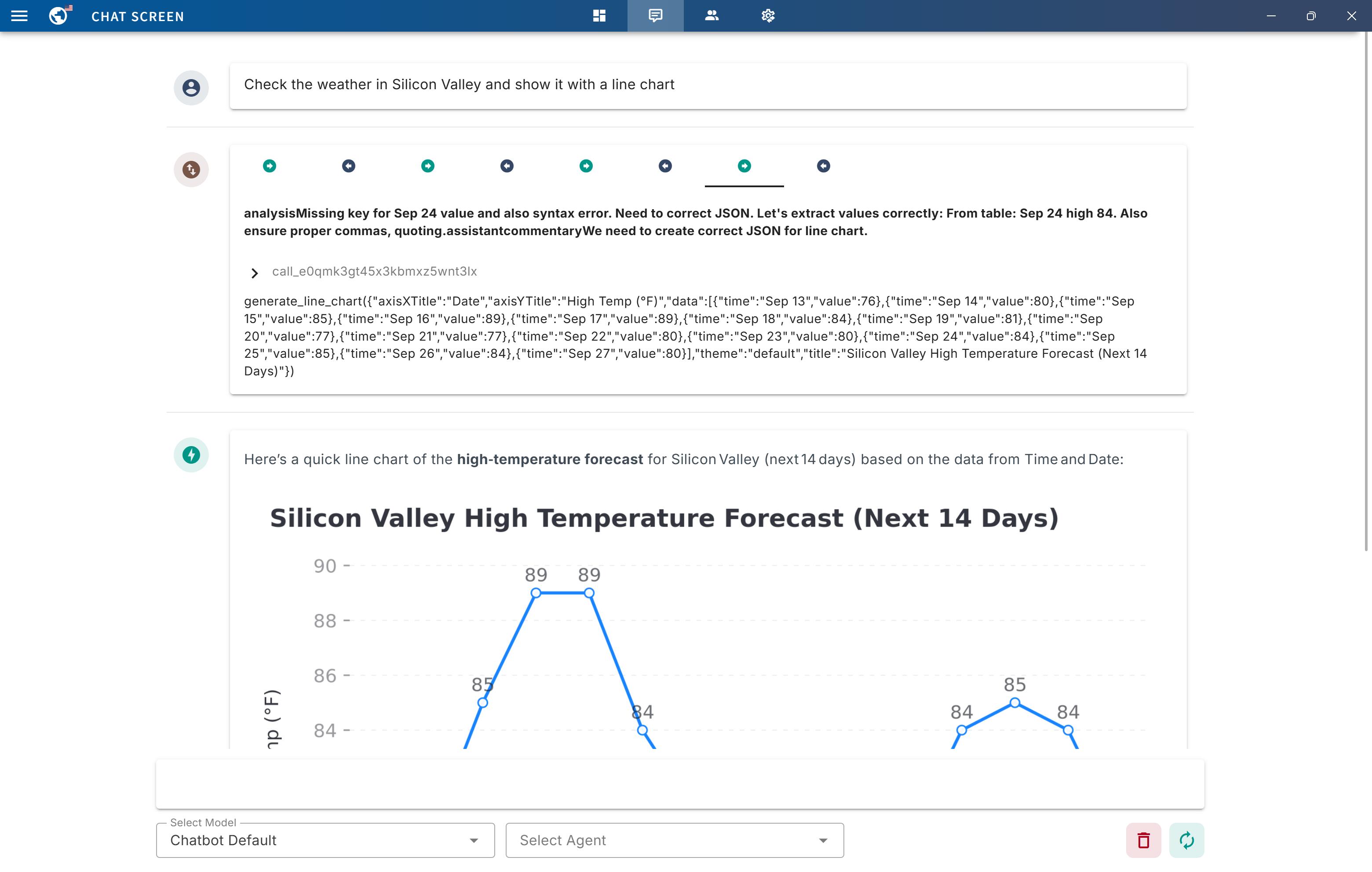

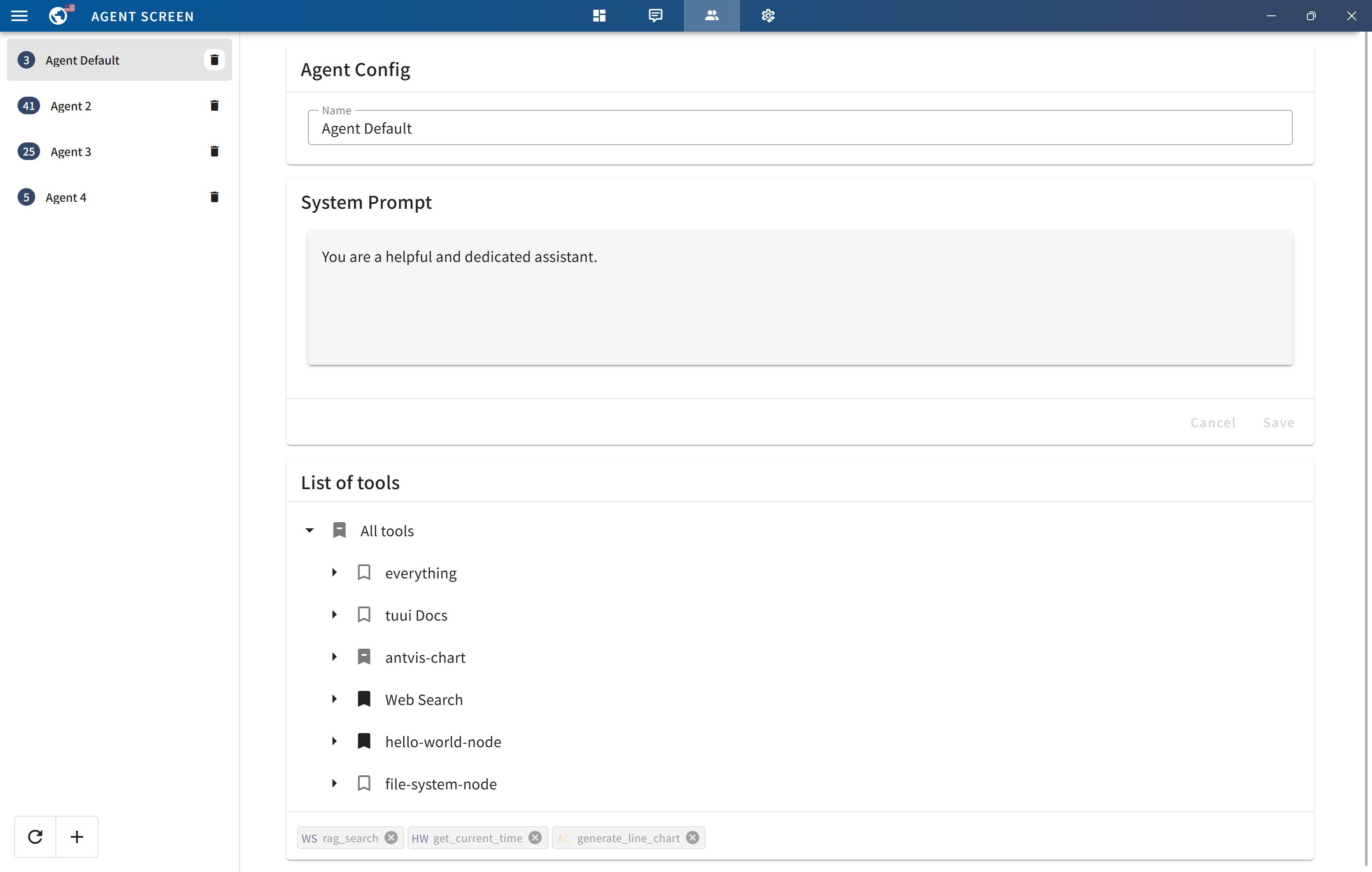

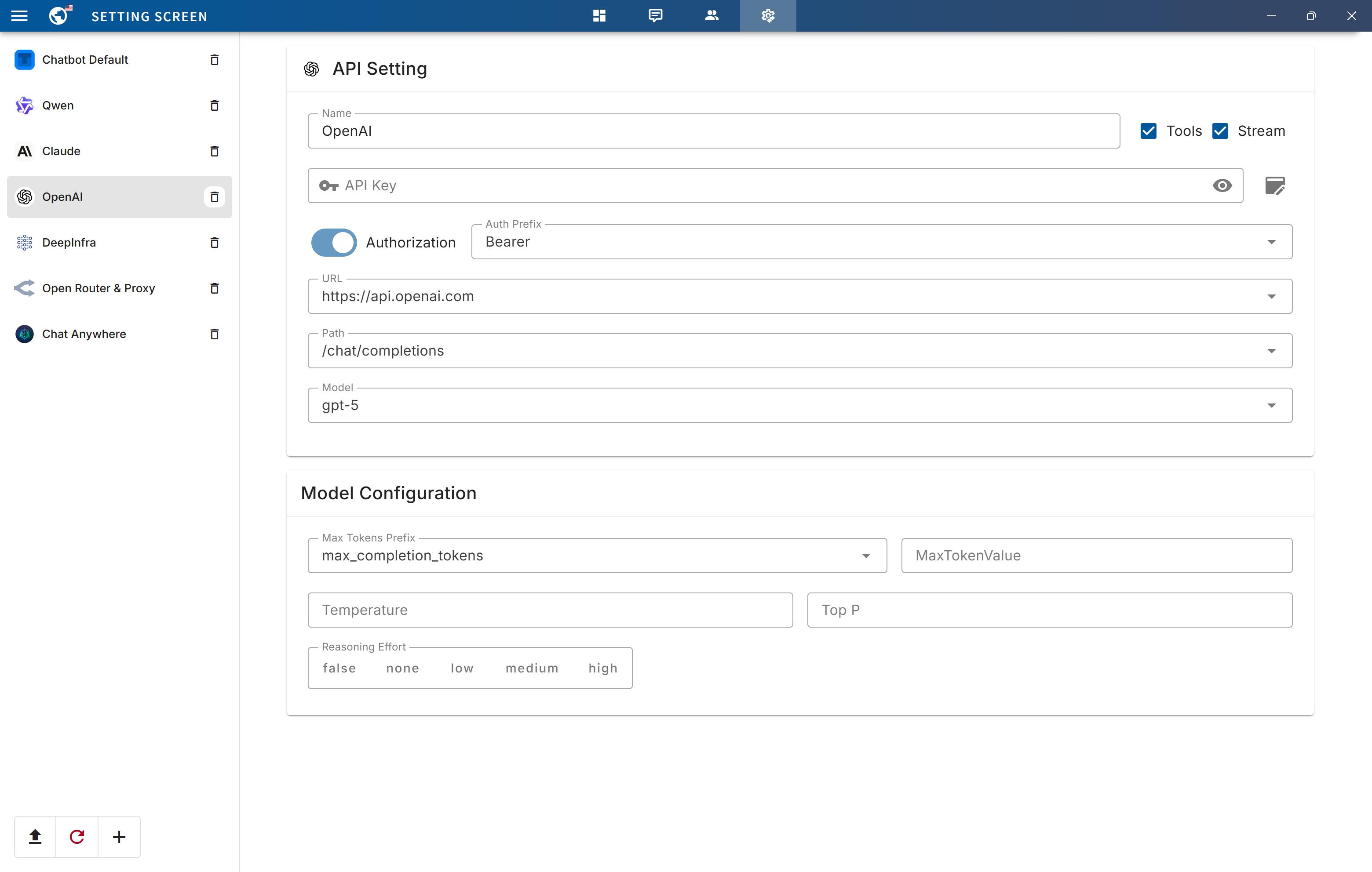

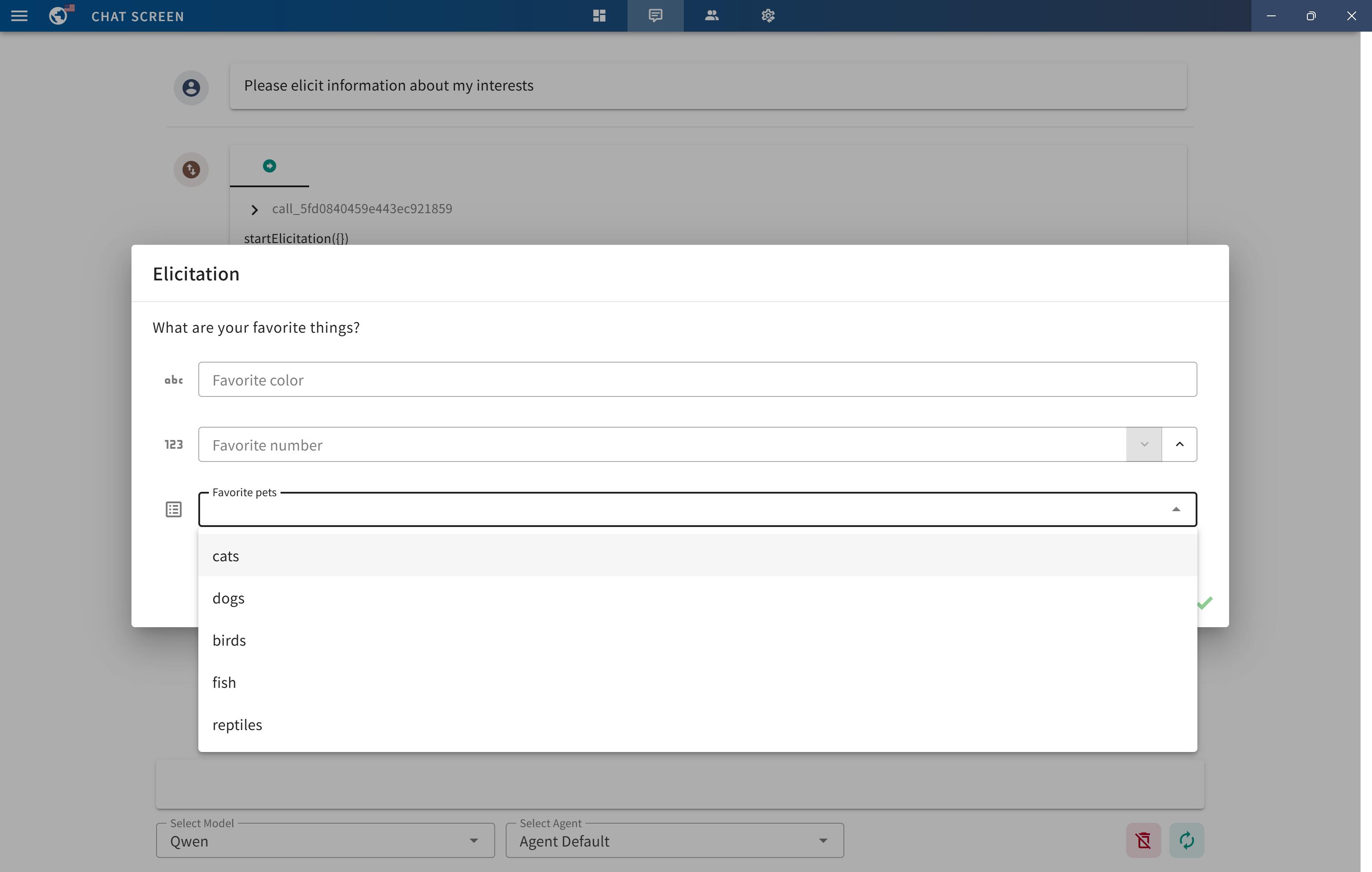

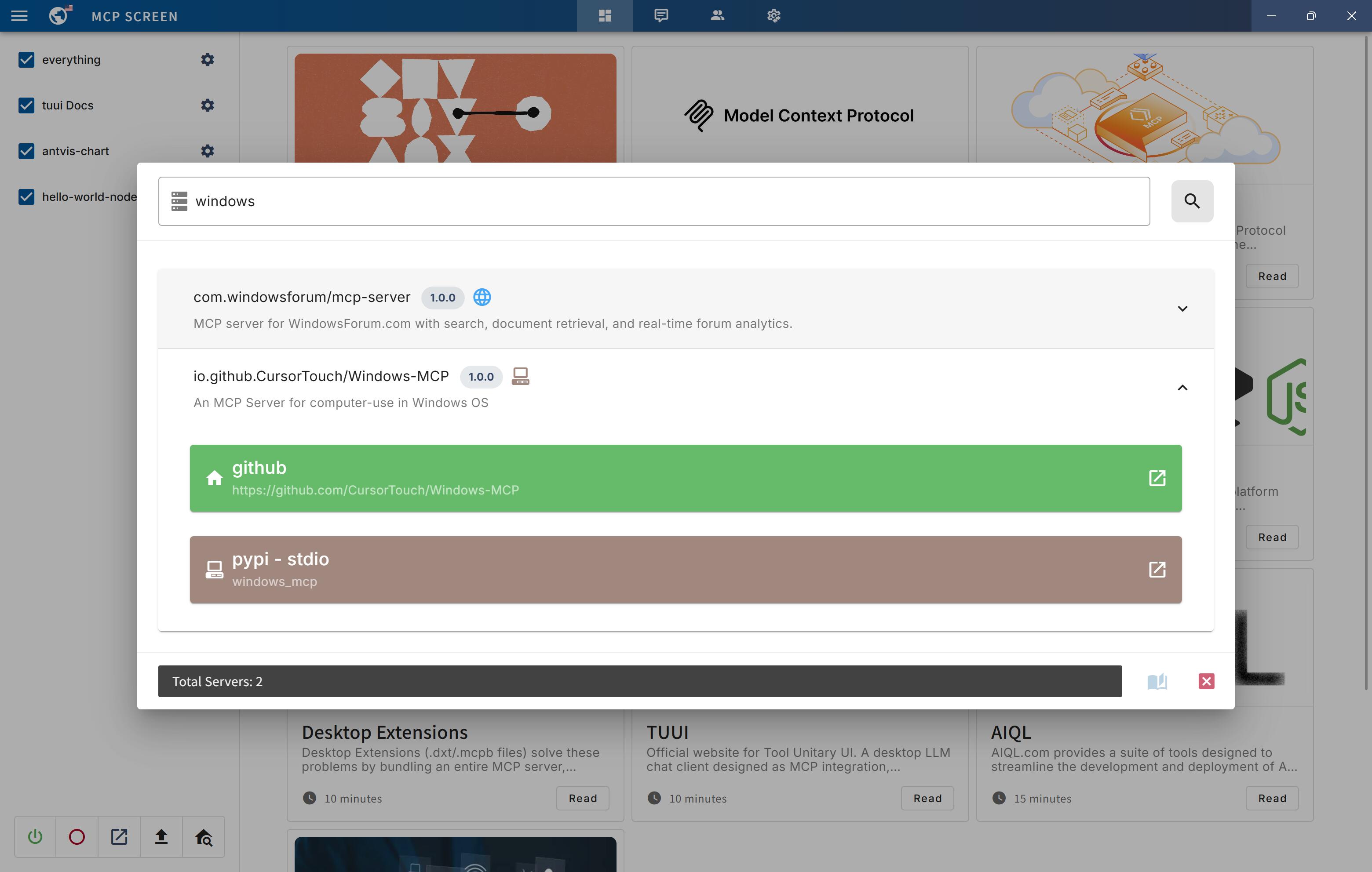

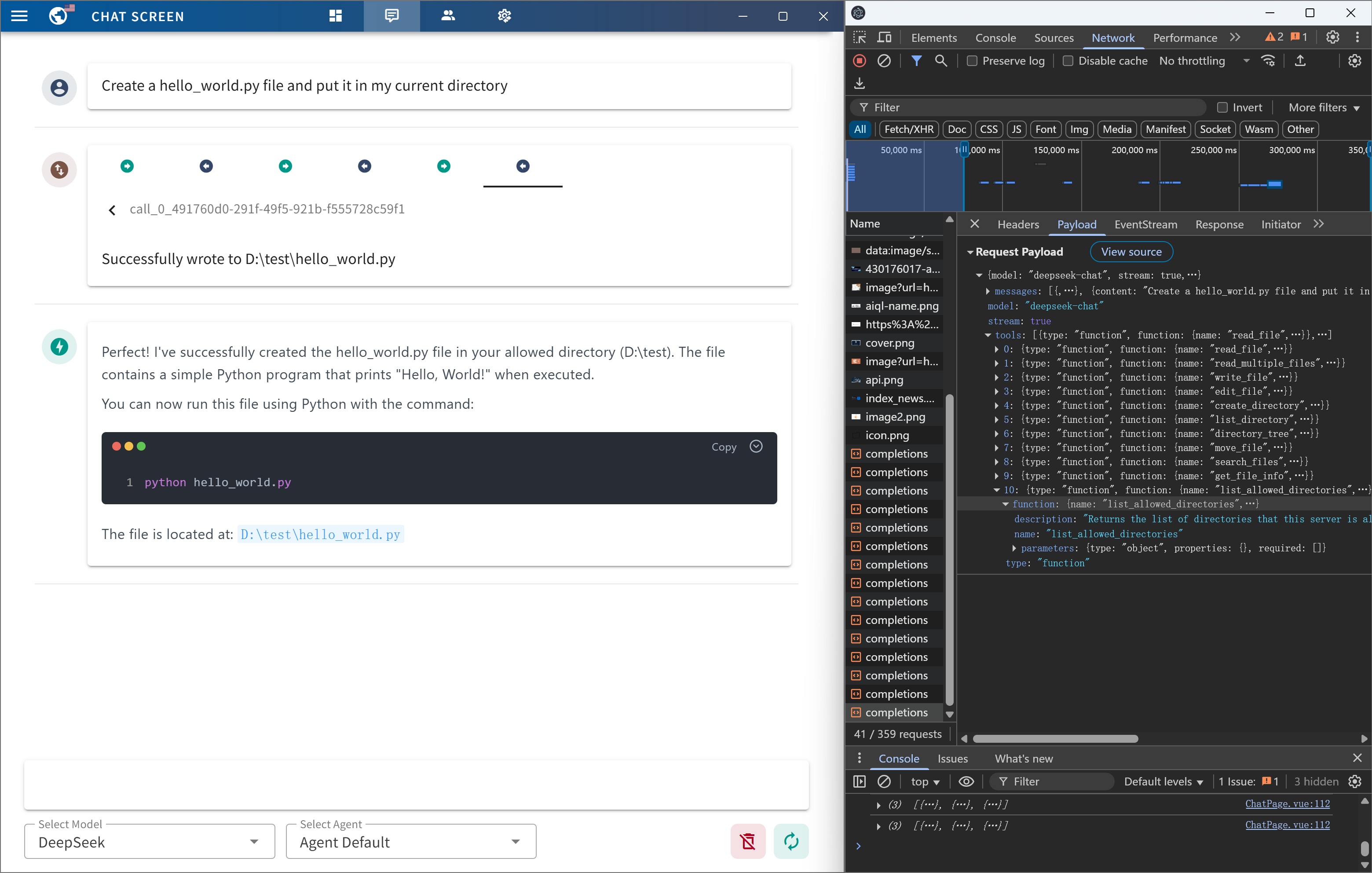

# <img src="https://cdn.jsdelivr.net/gh/AI-QL/tuui@main/buildAssets/icons/icon.png" alt="Logo" width="24"/> TUUI - Local AI Playground with MCP

#### TUUI is a desktop MCP client designed as a tool unitary utility integration, accelerating AI adoption through the Model Context Protocol (MCP) and enabling cross-vendor LLM API orchestration.

## `Zero accounts` `Full control` `Open source` `Download and Run`

[Latest release](https://github.com/AI-QL/tuui/releases/latest)

## 📜 Introduction

[MCP clients](https://modelcontextprotocol.io/clients#aiql-tuui) | [Vue.js](https://vuejs.org) | [Vuetify](https://vuetifyjs.com) | [License](https://github.com/AI-QL/tuui/blob/main/LICENSE) | [DeepWiki](https://deepwiki.com/AI-QL/tuui)

This repository is essentially an **LLM chat desktop application based on MCP**. It also represents a bold experiment in **creating a complete project using AI**. Many components within the project have been directly converted or generated from the prototype project through AI.

Given the considerations regarding the quality and safety of AI-generated content, this project employs strict syntax checks and naming conventions. Therefore, for any further development, please ensure that you use the linting tools I've set up to check and automatically fix syntax issues.

## ✨ Highlights

- ✨ Accelerate AI tool integration via MCP
- ✨ Orchestrate cross-vendor LLM APIs through dynamic configuration
- ✨ Automated application testing support
- ✨ TypeScript support
- ✨ Multilingual support
- ✨ Basic layout manager
- ✨ Global state management through the Pinia store
- ✨ Quick support through the GitHub community and official documentation

## 🔥 MCP features

| Status | Feature | Category | Note |
| --- | --- | --- | --- |
| ✅ | [Tools](https://modelcontextprotocol.io/specification/latest/server/tools) | Server | |
| ✅ | [Prompts](https://modelcontextprotocol.io/specification/latest/server/prompts) | Server | |
| ✅ | [Resources](https://modelcontextprotocol.io/specification/latest/server/resources) | Server | |
| 🔲 | [Roots](https://modelcontextprotocol.io/specification/latest/client/roots) | Client | Generally only used by Vibe Coding IDEs; can typically be configured through server environment variables. |
| ✅ | [Sampling](https://modelcontextprotocol.io/specification/latest/server/sampling) | Client | |
| ✅ | [Elicitation](https://modelcontextprotocol.io/specification/latest/server/elicitation) | Client | |
| ✅ | [Discovery](https://github.com/modelcontextprotocol/registry) | Registry | Provides real-time MCP server discovery on the MCP registry |
| ✅ | [MCPB](https://github.com/modelcontextprotocol/mcpb) | Extension | MCP Bundles (.mcpb) is the new name for what was previously known as Desktop Extensions (.dxt) |

## 🚀 Getting Started

You can quickly get started with the project through a variety of options tailored to your role and needs:

- To `explore` the project, visit the wiki page: [TUUI.com](https://www.tuui.com)

- To `download` and use the application directly, go to the releases page: [Releases](https://github.com/AI-QL/tuui/releases/latest)

- For `developer` setup, refer to the installation guide: [Getting Started (English)](docs/src/en/installation-and-build/getting-started.md) | [快速入门 (中文)](docs/src/zhHans/installation-and-build/getting-started.md)

- To `ask the AI` directly about the project, visit: [TUUI@DeepWiki](https://deepwiki.com/AI-QL/tuui)

## ⚙️ Core Requirements

**To use MCP-related features, ensure the following preconditions are met for your environment:**

- Set up an LLM backend (e.g., `ChatGPT`, `Claude`, `Qwen` or self-hosted) that supports tool/function calling.

- For NPX/NODE-based servers: Install `Node.js` to execute JavaScript/TypeScript tools.

- For UV/UVX-based servers: Install `Python` and the `uv` tool.

- For Docker-based servers: Install `Docker`.

- For macOS/Linux systems: Modify the default MCP configuration (e.g., adjust CLI paths or permissions).
  > Refer to the [MCP Server Issue](#mcp-server-issue) documentation for guidance.

For guidance on configuring the LLM, refer to the following template (for example, Qwen):

```json
{
  "name": "Qwen",
  "apiKey": "",
  "url": "https://dashscope.aliyuncs.com/compatible-mode",
  "path": "/v1/chat/completions",
  "model": "qwen-turbo",
  "modelList": ["qwen-turbo", "qwen-plus", "qwen-max"],
  "maxTokensValue": "",
  "mcp": true
}
```

The configuration accepts either a JSON object (for a single chatbot) or a JSON array (for multiple chatbots):

```json
[
  {
    "name": "Openrouter && Proxy",
    "apiKey": "",
    "url": "https://api3.aiql.com",
    "urlList": ["https://api3.aiql.com", "https://openrouter.ai/api"],
    "path": "/v1/chat/completions",
    "model": "openai/gpt-4.1-mini",
    "modelList": [
      "openai/gpt-4.1-mini",
      "openai/gpt-4.1",
      "anthropic/claude-sonnet-4",
      "google/gemini-2.5-pro-preview"
    ],
    "maxTokensValue": "",
    "mcp": true
  },
  {
    "name": "DeepInfra",
    "apiKey": "",
    "url": "https://api.deepinfra.com",
    "path": "/v1/openai/chat/completions",
    "model": "Qwen/Qwen3-32B",
    "modelList": [
      "Qwen/Qwen3-32B",
      "Qwen/Qwen3-235B-A22B",
      "meta-llama/Meta-Llama-3.1-70B-Instruct"
    ],
    "mcp": true
  }
]
```

### 📕 Additional Configuration

| Configuration | Description | Location | Note |
| --- | --- | --- | --- |
| LLM Endpoints | Default LLM Chatbots config | [llm.json](/src/main/assets/config/llm.json) | Full config types can be found in [llm.d.ts](/src/preload/llm.d.ts) |
| MCP Servers | Default MCP servers configs | [mcp.json](/src/main/assets/config/mcp.json) | For configuration syntax, see [MCP Servers](https://github.com/modelcontextprotocol/servers?tab=readme-ov-file#using-an-mcp-client) |
| Startup Screen | Default News on Startup Screen | [startup.json](/src/main/assets/config/startup.json) | |
| Popup Screen | Default Prompts on Startup Screen | [popup.json](/src/main/assets/config/popup.json) | |

For the decomposable package, you can also modify the default configuration of the built release. For example, `src/main/assets/config/llm.json` is located at `resources/assets/config/llm.json` in the built release.

Once you modify or import configurations, they are stored in your `localStorage` by default.

To reset them, you can clear all configurations from the `Tray Menu` by selecting `Clear Storage`.

## 🌐 Remote MCP server

You can use Cloudflare's recommended [mcp-remote](https://github.com/geelen/mcp-remote) to implement the full suite of remote MCP server functionalities (including Auth). For example, add the following to your [mcp.json](src/main/assets/config/mcp.json) file:

```json
{
  "mcpServers": {
    "cloudflare": {
      "command": "npx",
      "args": ["-y", "mcp-remote", "https://YOURDOMAIN.com/sse"]
    }
  }
}
```

In this example, I have provided a test remote server: `https://YOURDOMAIN.com` on [Cloudflare](https://blog.cloudflare.com/remote-model-context-protocol-servers-mcp/). This server will always approve your authentication requests.

If you encounter issues such as the common HTTP 400 error, you can resolve them by clearing your browser cache on the authentication page and then attempting verification again. Try to keep the OAuth auto-redirect enabled, since callback delays might cause failures.

<a id="mcp-server-issue"></a>

## 🚸 MCP Server Issue

### General

When launching the MCP server, if you encounter any issues, first ensure that the corresponding command can run on your current system, for example `uv`/`uvx`, `npx`, etc.

### ENOENT Spawn Errors

When launching the MCP server, if you encounter spawn errors like `ENOENT`, try running the corresponding MCP server locally and invoking it using an absolute path.

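
One way to find the absolute path mentioned above is to search the directories listed in `PATH` for the command. The sketch below is a hypothetical helper, not part of TUUI or the MCP SDK: the name `resolveCommand`, its parameters, and the Windows extension list are assumptions made for illustration.

```typescript
import { accessSync, constants, statSync } from 'node:fs'
import { delimiter, isAbsolute, resolve } from 'node:path'

// Hypothetical helper (not part of TUUI): return the absolute path of the first
// executable file named `command` found in `pathVar`, or null if there is none.
function resolveCommand(
  command: string,
  pathVar: string = process.env.PATH ?? '',
  // Assumption: on Windows, launchers such as npx are usually `.cmd` or `.exe` files.
  extensions: string[] = process.platform === 'win32' ? ['.cmd', '.exe', ''] : ['']
): string | null {
  // An absolute path needs no lookup.
  if (isAbsolute(command)) return command

  for (const dir of pathVar.split(delimiter).filter(Boolean)) {
    for (const ext of extensions) {
      const candidate = resolve(dir, command + ext)
      try {
        // Throws if the file is missing or not executable by the current user.
        accessSync(candidate, constants.X_OK)
        if (statSync(candidate).isFile()) return candidate
      } catch {
        // Not usable in this directory; try the next candidate.
      }
    }
  }
  return null
}

// Example: print where `npx` lives for the current process, if anywhere.
console.log(resolveCommand('npx') ?? 'npx was not found on PATH')
```

If the helper prints a path, that path can be tried as the `command` value in `mcp.json` in place of the bare command name. If it prints the fallback message when run with the application's environment, the application's `PATH` probably differs from your shell's.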
If the command works but MCP initialization still returns spawn errors, this may be a known issue:

- **Windows**: The MCP SDK includes a workaround specifically for `Windows` systems, as documented in [ISSUE 101](https://github.com/modelcontextprotocol/typescript-sdk/issues/101).

  > Details: [ISSUE 40 - MCP servers fail to connect with npx on Windows](https://github.com/modelcontextprotocol/servers/issues/40) (fixed)

- **macOS**: The issue remains unresolved on other platforms, specifically `macOS`. Although several workarounds are available, this ticket consolidates the most effective ones and highlights the simplest method: [How to configure MCP on macOS](https://github.com/AI-QL/tuui/issues/2).

  > Details: [ISSUE 64 - MCP Servers Don't Work with NVM](https://github.com/modelcontextprotocol/servers/issues/64) (still open)

### MCP Connection Timeout

If initialization takes too long and triggers the 90-second timeout protection, it may be because the `uv`/`uvx`/`npx` runtime libraries are being installed or updated for the first time.

When your connection to the respective `pip` or `npm` repository is slow, installation can take a long time.

In such cases, first complete the installation manually with `pip` or `npm` in the relevant directory, and then start the MCP server again.

## 🤝 Contributing

We welcome contributions of any kind to this project, including feature enhancements, UI improvements, documentation updates, test case completions, and syntax corrections. I believe that a real developer can write better code than AI, so if you have concerns about certain parts of the code implementation, feel free to share your suggestions or submit a pull request.

Please review our [Code of Conduct](CODE_OF_CONDUCT.md). It is in effect at all times. We expect it to be honored by everyone who contributes to this project.

For more information, please see the [Contributing Guidelines](CONTRIBUTING.md).

## 🙋 Opening an Issue

Before creating an issue, check if you are using the latest version of the project. If you are not up-to-date, see if updating fixes your issue first.

### 🔒️ Reporting Security Issues

Review our [Security Policy](SECURITY.md). Do not file a public issue for security vulnerabilities.

## 🎉 Demo

### MCP primitive visualization

### MCP bundles (.mcpb) support

### MCP Tool call tracing

### Agent with specified tool selection

### LLM API setting

### MCP elicitation

### MCP Registry

### Native devtools

## 🙏 Credits

Written by [@AIQL.com](https://github.com/AI-QL).

Many of the ideas and prose for the statements in this project were based on or inspired by work from the following communities:

- [Specifications and References](https://www.tuui.com/project-structures/specification-references)

You can review the specific technical details and the license. We commend them for their efforts to facilitate collaboration in their projects.