
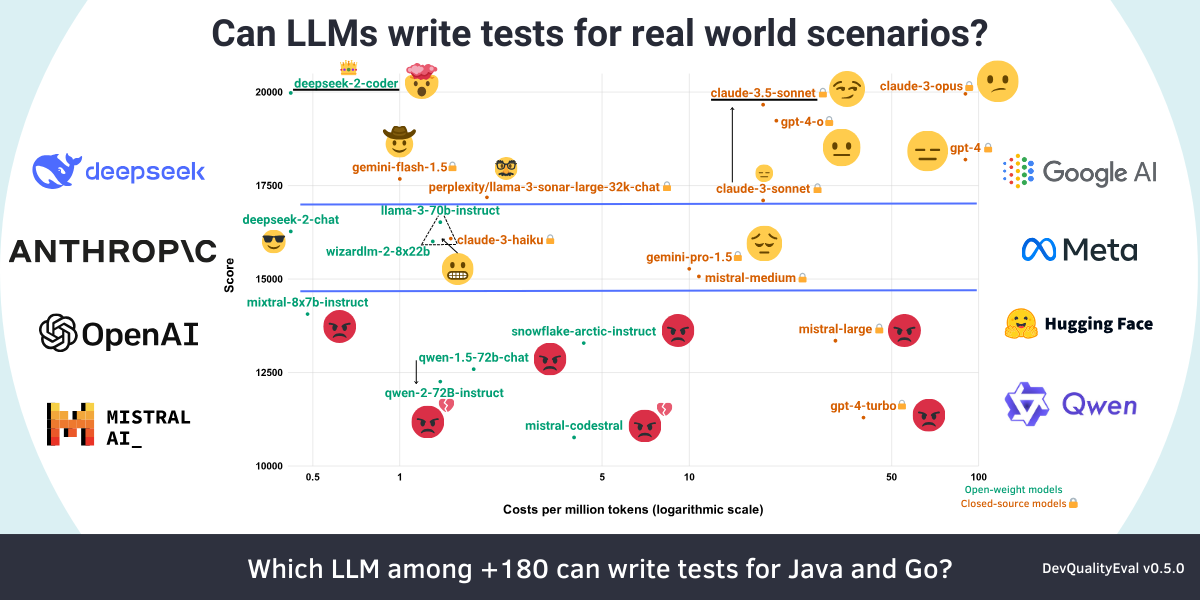

# DevQualityEval

An evaluation benchmark 📈 and framework to compare and evolve the quality of code generation of LLMs.

This repository gives developers of LLMs (and other code generation tools) a standardized benchmark and framework to improve real-world usage in the software development domain and provides users of LLMs with metrics and comparisons to check if a given LLM is useful for their tasks.

The [latest results](docs/reports/v0.5.0) are discussed in a deep dive: [DeepSeek v2 Coder and Claude 3.5 Sonnet are more cost-effective at code generation than GPT-4o!](https://symflower.com/en/company/blog/2024/dev-quality-eval-v0.5.0-deepseek-v2-coder-and-claude-3.5-sonnet-beat-gpt-4o-for-cost-effectiveness-in-code-generation/)

## Installation

[Install Git](https://git-scm.com/downloads), [install Go](https://go.dev/doc/install), and then execute the following commands:

```bash
git clone https://github.com/symflower/eval-dev-quality.git
cd eval-dev-quality
go install -v github.com/symflower/eval-dev-quality/cmd/eval-dev-quality
```

You can now use the `eval-dev-quality` binary to [execute the benchmark](#usage).

## Usage

> REMARK This project does not execute the LLM generated code in a sandbox by default. Make sure that you are running benchmarks only inside of an isolated environment, e.g. by using `--runtime docker`.

The easiest-to-use LLM provider is [openrouter.ai](https://openrouter.ai/). You need to create an [access key](https://openrouter.ai/keys) and save it in an environment variable:

```bash
export PROVIDER_TOKEN=openrouter:${your-key}
```

Then you can run all benchmark tasks on all models and repositories:

```bash
eval-dev-quality evaluate
```

The output of the commands is a detailed log of all the requests and responses to the models and of all the commands executed.
After the execution, you can find the final result saved to the file `evaluation.csv`.

See `eval-dev-quality --help` and especially `eval-dev-quality evaluate --help` for options.

### Example usage: Evaluate only one or more models

If you want to evaluate only one or more models, use the `--model` option to select a model. You can repeat this option for as many models as you want.

Executing the following command:

```bash
eval-dev-quality evaluate --model=openrouter/meta-llama/llama-3-70b-instruct
```

should return an evaluation log similar to this:

<details>
<summary>Log for the above command.</summary>

````plain
2024/05/02 10:01:58 Writing results to evaluation-2024-05-02-10:01:58
2024/05/02 10:01:58 Checking that models and languages can be used for evaluation
2024/05/02 10:01:58 Evaluating model "openrouter/meta-llama/llama-3-70b-instruct" using language "golang" and repository "golang/plain"
2024/05/02 10:01:58 Querying model "openrouter/meta-llama/llama-3-70b-instruct" with:
	Given the following Go code file "plain.go" with package "plain", provide a test file for this code.
	The tests should produce 100 percent code coverage and must compile.
	The response must contain only the test code and nothing else.

	```golang
	package plain

	func plain() {
		return // This does not do anything but it gives us a line to cover.
	}
	```
2024/05/02 10:02:00 Model "openrouter/meta-llama/llama-3-70b-instruct" responded with:
	```go
	package plain

	import "testing"

	func TestPlain(t *testing.T) {
		plain()
	}
	```
2024/05/02 10:02:00 $ symflower test --language golang --workspace /tmp/eval-dev-quality2330727502/plain
Checking for updates
There is a new version of symflower available! Please run `symflower update`.
=== RUN TestPlain
--- PASS: TestPlain (0.00s)
PASS
coverage: 100.0% of statements
ok plain 0.002s coverage: 100.0% of statements

DONE 1 tests in 0.348s
2024/05/02 10:02:01 Evaluated model "openrouter/meta-llama/llama-3-70b-instruct" using language "golang" and repository "golang/plain": encountered 0 problems: []
2024/05/02 10:02:01 Evaluating model "openrouter/meta-llama/llama-3-70b-instruct" using language "java" and repository "java/plain"
2024/05/02 10:02:01 Querying model "openrouter/meta-llama/llama-3-70b-instruct" with:
	Given the following Java code file "src/main/java/com/eval/Plain.java" with package "com.eval", provide a test file for this code with JUnit 5 as a test framework.
	The tests should produce 100 percent code coverage and must compile.
	The response must contain only the test code and nothing else.

	```java
	package com.eval;

	class Plain {
		static void plain() {
		}
	}
	```
2024/05/02 10:02:02 Model "openrouter/meta-llama/llama-3-70b-instruct" responded with:
	```java
	package com.eval;

	import org.junit.jupiter.api.Test;

	import static org.junit.jupiter.api.Assertions.assertFalse;
	import static org.junit.jupiter.api.Assertions.assertTrue;

	public class PlainTest {
		@Test
		void testPlain() {
			Plain.plain();
			// Since the method is empty, we can only assert that it doesn't throw an exception
			assertTrue(true);
		}
	}
	```
2024/05/02 10:02:02 $ symflower test --language java --workspace /tmp/eval-dev-quality1094965069/plain
Total coverage 100.000000%
2024/05/02 10:02:09 Evaluated model "openrouter/meta-llama/llama-3-70b-instruct" using language "java" and repository "java/plain": encountered 0 problems: []
2024/05/02 10:02:09 Evaluating models and languages
2024/05/02 10:02:09 Evaluation score for "openrouter/meta-llama/llama-3-70b-instruct" ("code-no-excess"): score=12, coverage=2, files-executed=2, response-no-error=2, response-no-excess=2, response-not-empty=2, response-with-code=2
````

</details>

By default, the execution also creates a report file `REPORT.md` that contains additional evaluation results and links to individual result files.

# Containerized use

## Notes

The following parameters behave differently when using a containerized runtime:

- `--configuration`: Passing configuration files to the Docker runtime is currently unsupported.
- `--testdata`: The check whether the path exists is skipped on the host system but still enforced inside the container, because the paths on the host and inside the container might differ.

## Docker

### Setup

Ensure that Docker is installed on the system.

### Build or pull the image

```bash
docker build . -t eval-dev-quality:dev
```

```bash
docker pull ghcr.io/symflower/eval-dev-quality:main
```

### Run the evaluation either with the built or pulled image

The following command runs the model `symflower/symbolic-execution` and stores the results of the run inside the local directory `evaluation`.

```bash
eval-dev-quality evaluate --runtime docker --runtime-image eval-dev-quality:dev --model symflower/symbolic-execution
```

Omitting the `--runtime-image` parameter defaults to the image from the `main` branch, `ghcr.io/symflower/eval-dev-quality:main`:

```bash
eval-dev-quality evaluate --runtime docker --model symflower/symbolic-execution
```

## Kubernetes

Please check the [Kubernetes](./docs/kubernetes/README.md) documentation.

## Providers

The following providers (for model inference) are currently supported:

- [OpenRouter](https://openrouter.ai/)
  - register API key via: `--tokens=openrouter:${key}` or environment `PROVIDER_TOKEN=openrouter:${key}`
  - select models via the `openrouter` prefix, e.g. `--model openrouter/meta-llama/llama-3.1-8b-instruct`
- [Ollama](https://ollama.com/)
  - the evaluation listens to the default Ollama port (`11434`), or will attempt to start an Ollama server if you have the `ollama` binary on your path
  - select models via the `ollama` prefix, e.g. `--model ollama/llama3.1:8b`
- [OpenAI API](https://platform.openai.com/docs/api-reference/chat/create)
  - use any inference endpoint that conforms to the OpenAI chat completion API
  - register the endpoint using `--urls=custom-${name}:${endpoint-url}` (mind the `custom-` prefix) or using the environment `PROVIDER_URL`
  - make sure to register your API key under the same `custom-${name}`
  - select models via the `custom-${name}` prefix
  - example for [fireworks.ai](https://fireworks.ai/): `eval-dev-quality evaluate --urls=custom-fw:https://api.fireworks.ai/inference/v1 --tokens=custom-fw:${your-api-token} --model custom-fw/accounts/fireworks/models/llama-v3p1-8b-instruct`

# The Evaluation

With `DevQualityEval` we answer the following questions:

- Which LLMs can solve software development tasks?
- How good is the quality of their results?

Programming is a non-trivial profession. Even writing tests for an empty function requires substantial knowledge of the used programming language and its conventions. We already investigated this challenge and how many LLMs failed at it in our [first `DevQualityEval` report](https://symflower.com/en/company/blog/2024/can-ai-test-a-go-function-that-does-nothing/#why-evaluate-an-empty-function).
This highlights the need for a **benchmarking framework for evaluating AI performance on software development task solving**.

## Setup

The models evaluated in `DevQualityEval` have to solve programming tasks, not only in one, but in multiple programming languages.
Every task is a well-defined, abstract challenge that the model needs to complete (for example: writing a unit test for a given function).
Multiple concrete cases (or candidates) exist for a given task that each represent an actual real-world example that a model has to solve (e.g. for function `abc() {...` write a unit test).

Completing a task-case rewards points depending on the quality of the result. This, of course, depends on which criteria make the solution to a task a "good" solution, but the general rule is: the more points, the better. For example, the unit tests generated by a model might actually be compiling, yielding points that set the model apart from other models that generate only non-compiling code.

### Running specific tasks

#### Via repository

Each repository can contain a configuration file `repository.json` in its root directory specifying a list of tasks which are supposed to be run for this repository.

```json
{
  "tasks": ["write-tests"]
}
```

For the evaluation of the repository only the specified tasks are executed. If no `repository.json` file exists, all tasks are executed.

## Tasks

### Task: Test Generation

Test generation is the task of generating a test suite for a given source code example.

The great thing about test generation is that it is easy to automatically check if the result is correct.
The generated test suite needs to compile and provide 100% coverage. A model can only write such tests if it understands the source, so implicitly we are evaluating the language understanding of an LLM.
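
To illustrate why such a check is easy to automate, here is a minimal sketch that extracts the statement coverage from test output in the style of the Go log shown earlier (`coverage: 100.0% of statements`). The function name `parseCoverage` and the regular expression are assumptions made for this example; this is not the evaluation's actual implementation.

```go
package main

import (
	"fmt"
	"regexp"
	"strconv"
)

// coveragePattern matches lines such as "coverage: 100.0% of statements".
// The pattern is an assumption based on the example log in this README.
var coveragePattern = regexp.MustCompile(`coverage: (\d+(?:\.\d+)?)% of statements`)

// parseCoverage is a hypothetical helper that returns the first coverage
// percentage found in the output and whether a coverage line was present.
func parseCoverage(output string) (float64, bool) {
	match := coveragePattern.FindStringSubmatch(output)
	if match == nil {
		return 0, false
	}
	percent, err := strconv.ParseFloat(match[1], 64)
	if err != nil {
		return 0, false
	}
	return percent, true
}

func main() {
	output := "--- PASS: TestPlain (0.00s)\nPASS\ncoverage: 100.0% of statements\n"
	percent, found := parseCoverage(output)
	fmt.Println("coverage found:", found, "full coverage:", found && percent == 100)
}
```

A missing coverage line (for example because the tests did not compile) is reported as `false` rather than as 0% so that the two situations can be told apart.
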

On a high level, `DevQualityEval` asks the model to produce tests for an example case, saves the response to a file and tries to execute the resulting tests together with the original source code.

#### Cases

Currently, the following cases are available for this task:

- Java
  - [`java/plain`](testdata/java/plain)
  - [`java/light`](testdata/java/light)
- Go
  - [`golang/plain`](testdata/golang/plain)
  - [`golang/light`](testdata/golang/light)
- Ruby
  - [`ruby/plain`](testdata/ruby/plain)
  - [`ruby/light`](testdata/ruby/light)

### Task: Code Repair

Code repair is the task of repairing source code with compilation errors.

For this task, we introduced the `mistakes` repository, which includes examples of source code with compilation errors. Each example is isolated in its own package, along with a valid test suite. We compile each package in the `mistakes` repository and provide the LLMs with both the source code and the list of compilation errors. The LLM's response is then validated with the predefined test suite.

#### Cases

Currently, the following cases are available for this task:

- Java
  - [`java/mistakes`](testdata/java/mistakes)
- Go
  - [`golang/mistakes`](testdata/golang/mistakes)
- Ruby
  - [`ruby/mistakes`](testdata/ruby/mistakes)

### Task: Transpile

Transpile is the task of converting source code from one language to another.

The test data repository for this task consists of several packages. At the root of each package, there must be a folder called `implementation` containing the **implementation files** (one per origin language), which are to be transpiled from. Each package must also contain a source file with a stub (a function signature so the LLMs know the signature of the function they need to generate code for) and a valid test suite. The LLM's response is then validated with the predefined test suite.
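
To make the idea of a stub plus a predefined test suite more concrete, here is a minimal Go sketch. The function `isSorted`, its signature, and the checks are hypothetical and not taken from the actual `transpile` test data. In a real package only the signature would be given, and the body shown here stands in for a model's response.

```go
package main

import "fmt"

// isSorted is a hypothetical transpilation target. A stub would only
// contain this signature; the body below plays the role of the code
// that a model generates from the origin-language implementation.
func isSorted(values []int) bool {
	for i := 1; i < len(values); i++ {
		if values[i-1] > values[i] {
			return false
		}
	}
	return true
}

func main() {
	// Stand-in for the predefined test suite that validates the response.
	cases := []struct {
		input []int
		want  bool
	}{
		{nil, true},
		{[]int{1, 2, 3}, true},
		{[]int{3, 1, 2}, false},
	}
	for _, c := range cases {
		if got := isSorted(c.input); got != c.want {
			fmt.Printf("FAIL: isSorted(%v) = %v, want %v\n", c.input, got, c.want)
			return
		}
	}
	fmt.Println("PASS")
}
```

Because the test suite is fixed in advance, a response is judged only by whether it satisfies the given checks, independent of how the model structures the implementation.
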

#### Cases

Currently, the following cases are available for this task:

- Java
  - [`java/transpile`](testdata/java/transpile)
- Go
  - [`golang/transpile`](testdata/golang/transpile)
- Ruby
  - [`ruby/transpile`](testdata/ruby/transpile)

### Reward Points

Currently, the following points are awarded:

- `response-no-error`: `+1` if the response did not encounter an error
- `response-not-empty`: `+1` if the response is not empty
- `response-with-code`: `+1` if the response contained source code
- `compiled`: `+1` if the source code compiled
- `statement-coverage-reached`: `+10` for each coverage object of executed code (disabled for `transpile` and `code-repair`, as models are writing the implementation code and could just add arbitrary statements to receive a higher score)
- `no-excess`: `+1` if the response did not contain more content than requested
- `passing-tests`: `+10` for each test that is passing (disabled for `write-tests`, as models are writing the test code and could just add arbitrary test cases to receive a higher score)

## Results

When interpreting the results, please keep in mind that LLMs are nondeterministic, so results may vary.

Furthermore, the choice of a "best" model for a task might depend on additional factors. For example, the needed computing resources or query cost of a cloud LLM endpoint differs greatly between models. Also, the open availability of model weights might change one's model choice. So in the end, there is no "perfect" overall winner, only the "perfect" model for a specific use case.

# How to extend the benchmark?

If you want to add new files to existing language repositories or new repositories to existing languages, [install the evaluation binary](#installation) of this repository and you are good to go.

To add new tasks to the benchmark, add features, or fix bugs, you'll need a development environment. The development environment comes with this repository and can be installed by executing `make install-all`. Then you can run `make` to see the documentation for all the available commands.

# How to contribute?

First of all, thank you for thinking about contributing! There are multiple ways to contribute:

- Add more files to existing language repositories.
- Add more repositories to languages.
- Implement another language and add repositories for it.
- Implement new tasks for existing languages and repositories.
- Add more features and fix bugs in the evaluation, development environment, or CI: [best to have a look at the list of issues](https://github.com/symflower/eval-dev-quality/issues).

If you want to contribute but are unsure how: [create a discussion](https://github.com/symflower/eval-dev-quality/discussions) or write us directly at [markus.zimmermann@symflower.com](mailto:markus.zimmermann@symflower.com).

# When and how to release?

## Publishing Content

Releasing a new version of `DevQualityEval` and publishing content about it are two different things!
However, we plan development and releases to be "content-driven", i.e. we work on / add features that are interesting to write about (see "Release Roadmap" below).
Hence, for every release we also publish a deep dive blog post with our latest features and findings.
Our published content is aimed at giving insight into our work and educating the reader.

- new features that add value (i.e. change / add to the scoring / ranking) are always publish-worthy / release-worthy
- new tools / models are very often not release-worthy but still publish-worthy (e.g. how is new model `XYZ` doing in the benchmark)
- insights, learnings, problems, surprises, achieved goals and experiments are always publish-worthy
  - they need to be documented for later publishing (in a deep-dive blog post)
  - they can also be published right away (depending on the nature of the finding) as a small report / post

❗ Always publish / release / journal early:

- if something works, but is not merged yet: publish about it already
- if some feature is merged that is release-worthy, but we were planning on adding other things to that release: release anyway
- if something else is publish-worthy: at least write down a few bullet points immediately on why it is interesting, including examples

## Release Roadmap

The `main` branch is always stable and could theoretically be used to form a new release at any given time.
To avoid having hundreds of releases for every merge to `main`, we perform releases only when a (group of) publish-worthy feature(s) or important bugfix(es) is merged (see "Publishing Content" above).

Therefore, we plan releases in special `Roadmap for vX.Y.Z` issues.
Such an issue contains a current list of publish-worthy goals that must be met for that release, a `TODO` section with items not planned for the current but a future release, and instructions on how issues / PRs / tasks need to be groomed and what needs to be done for a release to happen.

The issue template for roadmap issues can be found at [`.github/ISSUE_TEMPLATE/roadmap.md`](.github/ISSUE_TEMPLATE/roadmap.md)